From Coursera, Introduction to Self-Driving Cars by University of Toronto

https://www.coursera.org/specializations/self-driving-cars?action=enroll

Self-Driving Hardware and Software Architectures

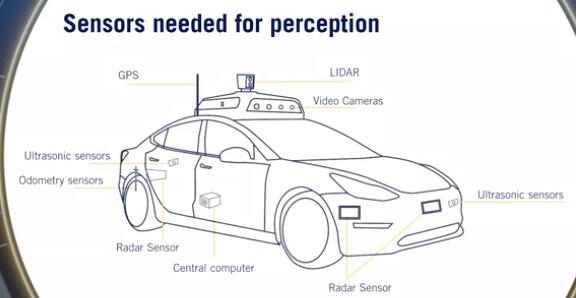

Sensors and Computing Hardware

Sensor types and characteristics

- Sensor Categorization:

- exteroceptive: extero=surroundings

- proprioceptive: proprio=internal

- Camera:

- Sensors for perception: essential for correctly perceiving the environment

- Comparison metrics:

- Resolution

- Field of view

- Dynamic range: difference between the darkest and the lightest tones in an image

- other properties: focal length, depth of field and frame rate

- Trade-off between resolution and FOV

- stereo camera:

- The combination of two cameras with overlapping fields of view and aligned image planes

- allow depth estimation from synchronized image pairs

- Pixel values from one image can be matched to the other image, producing a disparity map of the scene. This disparity can then be used to estimate depth at each pixel.

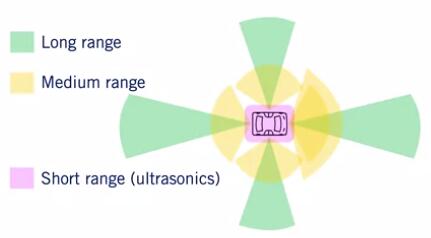
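
The disparity-to-depth step can be sketched as follows. This is a minimal illustration, assuming an ideal rectified stereo pair and the standard pinhole relation depth = focal length × baseline / disparity; the function name and the camera numbers (700 px focal length, 0.5 m baseline) are hypothetical and not taken from the course.

```python
def depth_from_disparity(disparity_px, focal_length_px, baseline_m):
    """Depth in metres for one pixel of a rectified stereo pair.

    Assumes an ideal pinhole model: depth = f * b / d.
    """
    if disparity_px <= 0:
        # Zero disparity means a point at infinity or a failed match.
        return float("inf")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical camera: 700 px focal length, 0.5 m baseline.
for disparity in (70.0, 35.0, 7.0):
    depth = depth_from_disparity(disparity, 700.0, 0.5)
    print(f"disparity {disparity:5.1f} px -> depth {depth:5.1f} m")
```

Depth is inversely proportional to disparity, so distant objects produce small disparities and their depth estimates are more sensitive to matching errors.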

- Lidar:

- involves shooting light beams into the environment and measuring the reflected return.

- include a spinning element with multiple stacked light sources

- output detailed 3D scene geometry from LIDAR point cloud

- not affected by the environment's lighting, so LIDARs do not face the same challenges as cameras when operating in poor or variable lighting conditions

- Comparison metrics:

- Number of beams: commonly 8, 16, 32, or 64

- Points per second: The faster the point collection, the more detailed the 3D point cloud can be.

- Rotation rate: The higher this rate, the faster the 3D point clouds are updated.

- Field of view

- Upcoming: Solid state LIDAR

- has no rotational component, unlike typical LIDARs

- expected to become extremely low-cost and reliable.
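
The interplay between the LIDAR comparison metrics above can be illustrated with a rough calculation. This is only a sketch, assuming a spinning unit with a 360° horizontal field of view whose points are spread evenly over all beams; the function name and the example figures are hypothetical, not the specification of any real sensor.

```python
def horizontal_resolution_deg(points_per_second, rotation_hz, num_beams,
                              horizontal_fov_deg=360.0):
    """Approximate angular spacing between consecutive points of one beam.

    Assumes points are distributed evenly over beams and over the rotation.
    """
    points_per_beam_per_rev = points_per_second / (rotation_hz * num_beams)
    return horizontal_fov_deg / points_per_beam_per_rev


# Hypothetical 64-beam unit producing 1.28 million points per second.
for rate_hz in (5, 10, 20):
    res = horizontal_resolution_deg(1_280_000, rate_hz, 64)
    print(f"{rate_hz:2d} Hz rotation -> {res:.2f} deg between points")
```

For a fixed number of points per second, a higher rotation rate refreshes the point cloud more often but spreads the points more coarsely within each revolution.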

- RADAR

- radio detection and ranging

- robust object detection and relative speed estimation

- particularly useful in adverse weather, as radars are mostly unaffected by precipitation.

- Comparison metrics:

- range

- field of view

- position and speed accuracy

- Configurations:

- WFOV (Wide FOV), short range

- NFOV (Narrow FOV), long range

- Ultrasonic

- also called sonar: sound navigation and ranging

- Short-range all-weather distance measurement

- Ideal for low-cost parking solutions

- Unaffected by lighting, precipitation

- Comparison metrics:

- Range

- FOV

- Cost

- GNSS/IMU:

- Global Navigation Satellite Systems (e.g. GPS or Galileo) and Inertial measurement units

- Direct measure of ego vehicle states

- position, velocity (GNSS): varying accuracies: RTK, PPP, DGPS

- angular rotation rate (IMU)

- acceleration (IMU)

- heading (IMU, GPS)

- Wheel odometry sensors

- Tracks wheel velocities and orientation

- Uses these to calculate overall speed and orientation of car

- speed accuracy

- position drift
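
How wheel velocities turn into vehicle speed and orientation can be sketched with simple dead reckoning. This is a minimal sketch, assuming a planar differential-wheel model on one axle with no slip; the function name, wheel radius and track width are hypothetical values chosen for illustration.

```python
import math


def integrate_wheel_odometry(pose, omega_left, omega_right,
                             wheel_radius, track_width, dt):
    """Advance (x, y, heading) by one time step from wheel angular rates.

    omega_* are wheel angular velocities in rad/s; assumes no wheel slip.
    Returns the new pose and the vehicle speed in m/s.
    """
    x, y, heading = pose
    v_left = omega_left * wheel_radius
    v_right = omega_right * wheel_radius
    speed = 0.5 * (v_left + v_right)              # forward speed of axle centre
    yaw_rate = (v_right - v_left) / track_width   # positive = turning left
    x += speed * math.cos(heading) * dt
    y += speed * math.sin(heading) * dt
    heading += yaw_rate * dt
    return (x, y, heading), speed


# Hypothetical car: 0.3 m wheel radius, 1.6 m track, both wheels at 10 rad/s.
pose = (0.0, 0.0, 0.0)
for _ in range(10):
    pose, speed = integrate_wheel_odometry(pose, 10.0, 10.0, 0.3, 1.6, 0.1)
print(pose, speed)
```

Because each step adds to the previous pose, any small error in wheel radius or any wheel slip accumulates over time, which is the position drift listed above.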

Self-driving computing hardware

- Need a "self-driving brain": the main decision-making unit of the car

- Image processing, Object detection, Mapping

- GPUs - Graphics Processing Units

- FPGAs - Field-Programmable Gate Arrays

- ASICs - Application-Specific Integrated Circuits

- Synchronization hardware

- To synchronize different modules and provide a common clock

- GPS relies on extremely accurate timing to function, and as such can act as an appropriate reference clock when available.

- sensor measurements must be timestamped with consistent times for sensor fusion to function correctly.
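
Why consistent timestamps matter for fusion can be seen in a small pairing routine. This is a sketch under the assumption that all sensors already stamp their data against a common clock; the function names and the tolerance value are hypothetical and do not come from the course.

```python
from bisect import bisect_left


def nearest_measurement(timestamps, query_time):
    """Index of the timestamp closest to query_time (timestamps sorted ascending)."""
    if not timestamps:
        raise ValueError("no measurements available")
    i = bisect_left(timestamps, query_time)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    before, after = timestamps[i - 1], timestamps[i]
    return i if (after - query_time) < (query_time - before) else i - 1


def pair_by_time(camera_times, lidar_times, max_offset_s):
    """Pair each camera frame with the closest LIDAR scan within a tolerance."""
    pairs = []
    for cam_idx, t in enumerate(camera_times):
        lidar_idx = nearest_measurement(lidar_times, t)
        if abs(lidar_times[lidar_idx] - t) <= max_offset_s:
            pairs.append((cam_idx, lidar_idx))
    return pairs


# Hypothetical 30 Hz camera and 10 Hz LIDAR on a shared clock (seconds).
print(pair_by_time([0.0, 0.033, 0.066, 0.1], [0.0, 0.1], max_offset_s=0.01))
```

If the two sensors used unsynchronized clocks, the same routine would silently pair measurements taken at different real times, which is why a common reference clock is needed.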

- Sensor placement:

Hardware Configuration Design

Sensor coverage requirements for different scenarios

- Assumptions:

- Aggressive deceleration: $5 m/s^2$

- Comfortable deceleration: $2 m/s^2$

- This is the norm, unless otherwise stated

- Stopping distance: $d=\frac {v^2}{2a}$
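
The stopping-distance formula can be checked numerically with the two deceleration assumptions above. This is a minimal sketch; the function and constant names are illustrative, and the 50 km/h case is an added example speed rather than a figure from the notes.

```python
AGGRESSIVE_DECEL = 5.0   # m/s^2, from the assumptions above
COMFORTABLE_DECEL = 2.0  # m/s^2, from the assumptions above


def stopping_distance_m(speed_kmh, deceleration_mps2):
    """Distance needed to stop from speed_kmh at constant deceleration: d = v^2 / (2a)."""
    speed_mps = speed_kmh / 3.6  # convert km/h to m/s
    return speed_mps ** 2 / (2.0 * deceleration_mps2)


for speed in (50, 120):
    hard = stopping_distance_m(speed, AGGRESSIVE_DECEL)
    soft = stopping_distance_m(speed, COMFORTABLE_DECEL)
    print(f"{speed} km/h: aggressive {hard:.0f} m, comfortable {soft:.0f} m")
```

At 120 km/h the aggressive case gives roughly 110 m, while comfortable deceleration needs well over twice that distance, so the assumed deceleration strongly drives the required sensor range.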

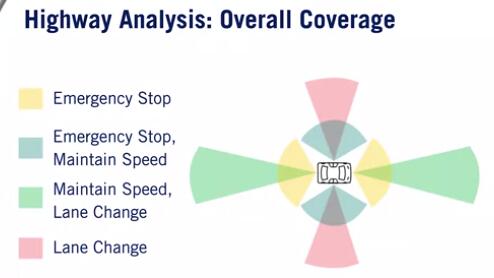

Highway driving

- Broadly, 3 kinds of maneuvers: Emergency Stop, Maintain Speed, Lane Change

- Emergency Stop:

- Longitudinal coverage: Assume we are travelling at 120 km/h

- Stopping distance is ~110 metres under aggressive deceleration

- Lateral coverage: At least adjacent lanes, since we may change lanes to avoid a hard stop.

- Maintain Speed

- Relative speeds are typically less than 30 km/h.

- Longitudinal coverage: At least ~100 metres in front.

- Both vehicles are moving, so we don't need to look as far as in the emergency-stop case.

- Lateral coverage: Usually the current lane. Adjacent lanes would be preferred for detecting merging vehicles.

- Lane Change

- summary:

Urban driving

- Broadly, 6 kinds of maneuvers:

- Emergency Stop, Maintain Speed, Lane Change

- Overtaking, Turning/crossing at intersections, Passing roundabouts

- Overtaking:

- Longitudinal coverage: when overtaking a parked or moving vehicle, need to detect oncoming traffic beyond the point of return to our own lane.

- Lateral coverage: always need to observe adjacent lanes. Need to observe additional lanes if other vehicles can move into adjacent lanes.

- Intersections:

- Observe beyond intersection for approaching vehicles, pedestrian crossings, clear exit lanes.

- Requires near omni-directional sensing for arbitrary intersection angles.

- Roundabouts:

- Lateral coverage: Vehicles are slower than usual, limited range requirement.

- Longitudinal coverage: Due to the shape of the roundabout, need a wider field of view.

- Summary:

Overall coverage, blind spots

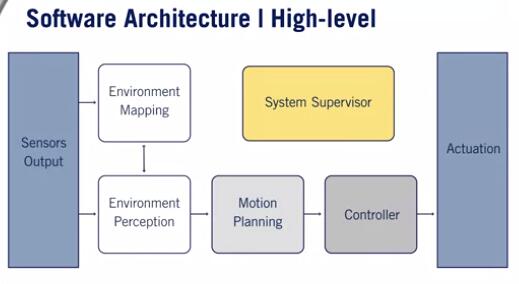

Software Architecture

The basic architecture of a typical self-driving software system

Identify the standard software decomposition

- Environment Perception

- Localization: GPS/IMU/Wheel Odometry

- Dynamic object detection -> Dynamic object tracking -> Object Motion Prediction: LIDAR, cameras, Radar

- Static object detection: HD road Map

- Environment Mapping

- Occupancy Grid Map: Object tracks, LIDAR

- Localization Map

- Detailed Road Map

- Motion Planning

- Mission Planner

- Behavior planner

- Local planner

- Controller

- Velocity controller: throttle percentage, brake percentage

- Steering controller: steering angle

- System Supervisor

- Hardware supervisor: sensor outputs

- Software supervisor: Environment perception, environment mapping, motion planning, controller

Environment Representation

Localization point cloud or feature map

- localization of vehicle in the environment.

- Collects continuous sets of LIDAR points

- The difference between successive LIDAR maps is used to calculate the movement of the autonomous vehicle
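
The idea of recovering vehicle motion from the difference between two scans can be shown in a heavily simplified form. This sketch assumes 2D points of static objects expressed in the vehicle frame, known one-to-one correspondences between the scans, and pure translation with no rotation; real systems typically use scan-matching methods such as Iterative Closest Point, which also estimate rotation and the correspondences themselves. The function name is hypothetical.

```python
def estimate_translation(prev_points, curr_points):
    """Vehicle translation (dx, dy) between two scans of the same static points.

    Assumes matching order in both lists and no rotation between scans.
    Static points appear to move opposite to the vehicle, hence prev - curr.
    """
    if not prev_points or len(prev_points) != len(curr_points):
        raise ValueError("scans must be non-empty and the same length")
    n = len(prev_points)
    dx = sum(p[0] - c[0] for p, c in zip(prev_points, curr_points)) / n
    dy = sum(p[1] - c[1] for p, c in zip(prev_points, curr_points)) / n
    return dx, dy


# Two static landmarks seen before and after the car moves 1 m forward (+x).
previous_scan = [(10.0, 2.0), (5.0, -1.0)]
current_scan = [(9.0, 2.0), (4.0, -1.0)]
print(estimate_translation(previous_scan, current_scan))
```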

Occupancy grid map

- collision avoidance with static objects.

- Discretized fine grain grid map

- Can be 2D or 3D

- Occupancy by a static object

- Trees and buildings

- Curbs and other non-drivable surfaces

- Dynamic objects are removed
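
The discretization behind an occupancy grid can be sketched in a few lines. This is a minimal 2D illustration, assuming the input points belong to static objects only (dynamic objects already removed) and are given in metres relative to the vehicle at the grid centre; practical occupancy grids usually store occupancy probabilities rather than binary flags. The function name and grid dimensions are hypothetical.

```python
import math


def build_occupancy_grid(static_points, cell_size_m, width_cells, height_cells):
    """Binary 2D grid with the vehicle at the centre: 1 = occupied, 0 = free or unknown."""
    grid = [[0] * width_cells for _ in range(height_cells)]
    for x, y in static_points:
        col = math.floor(x / cell_size_m) + width_cells // 2
        row = math.floor(y / cell_size_m) + height_cells // 2
        if 0 <= row < height_cells and 0 <= col < width_cells:
            grid[row][col] = 1  # a static object falls inside this cell
    return grid


# Hypothetical 8 x 8 grid with 0.5 m cells; the last point lies outside the grid.
points = [(1.2, 0.3), (-0.7, -1.1), (100.0, 0.0)]
grid = build_occupancy_grid(points, 0.5, 8, 8)
for row in grid:
    print("".join("#" if cell else "." for cell in row))
```

A smaller cell size gives a finer map at the cost of more cells to store and update, which is the trade-off implied by a fine-grained grid.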

Detailed road map

- path planning

- traffic regulation, lane boundaries

- 3 Methods of creation:

- Fully Online

- Fully Offline

- Created Offline and Updated Online